Market Overview & Analysis

Report Summary

The small language model for edge deployment market encompasses the development, optimization, and commercialization of compact AI language models (typically 100 million to 13 billion parameters) designed to perform inference directly on edge devices and in resource-constrained environments. These models deliver low-latency inference and enhanced privacy by minimizing or eliminating reliance on centralized cloud servers. The market spans model developers (including hyperscalers and open-source communities), semiconductor companies providing AI-optimized silicon, software framework providers enabling on-device deployment, and system integrators packaging edge AI solutions for vertical applications.

The current state of the market reflects a dramatic shift away from the “bigger is better” paradigm that dominated AI development through 2023. By early 2026, an industry consensus has formed: carefully engineered small models, trained on curated synthetic data and distilled from larger teachers, can match or exceed the performance of models ten times their size on targeted tasks. Microsoft’s Phi-4 family illustrates this, with the 14-billion-parameter Phi-4-reasoning model approaching DeepSeek R1 (671 billion parameters) on mathematical reasoning benchmarks. Google’s Gemma 3 270M, at just 270 million parameters, set a new reference point for instruction following in ultra-compact form factors, consuming only 0.75% of a Pixel 9 Pro’s battery for 25 conversations. These gains in performance per parameter have unlocked deployment scenarios, from wearables to industrial sensors, that were previously uneconomical.

The market is further catalyzed by the maturation of deployment frameworks. Meta’s ExecuTorch reached 1.0 general availability in October 2025, supporting 12+ hardware backends with a 50KB runtime footprint. Combined with llama.cpp for CPU inference and Apple’s MLX for Apple Silicon optimization, developers now have production-grade toolchains for every major edge platform. This ecosystem maturity is paired with rapid growth in AI-capable edge hardware: shipments reached an estimated 2.3 billion units in 2024 and are projected to reach 6 billion by 2030. Together, these factors are creating a self-reinforcing adoption cycle.
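The two shipment figures quoted above (2.3 billion units in 2024, 6 billion projected for 2030) imply a compound annual growth rate that can be checked with a few lines of arithmetic. The sketch below is illustrative only; the function name `implied_cagr` is hypothetical, and it assumes smooth compounding between the two endpoints.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints taken from the text above; the smooth-compounding assumption is ours.
units_2024 = 2.3e9
units_2030 = 6.0e9

cagr = implied_cagr(units_2024, units_2030, years=2030 - 2024)
print(f"Implied unit-shipment CAGR, 2024-2030: {cagr:.1%}")
```

Under that assumption, the shipment projection corresponds to growth of roughly 17% per year in units.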

Market Dynamics

Key Drivers

- Escalating data privacy regulations and enterprise security requirements: Regulations such as GDPR in Europe, CCPA in California, and India’s Digital Personal Data Protection Act are compelling enterprises to process sensitive data locally. SLMs deployed on-device ensure that user data never traverses external networks, making them essential for healthcare (HIPAA compliance), financial services, and government applications. On-device processing eliminates exposure to data breaches during transit and reduces the compliance burden associated with cross-border data transfers.

- Demand for ultra-low-latency, real-time AI inference: Applications in autonomous driving, industrial robotics, augmented reality, and real-time language translation require sub-50-millisecond response times that cloud round-trips cannot guarantee. SLMs running on edge NPUs deliver inference latency as low as 32 milliseconds on mobile-class hardware, enabling mission-critical use cases where even brief delays can have safety or economic consequences.

- Dramatic reduction in total cost of ownership (TCO): Early adopters report cost reductions of 4x or more when deploying SLMs instead of cloud-hosted LLM API calls. In one example, a mid-sized e-commerce retailer processing 200,000 monthly customer interactions with a hybrid architecture (Mistral 7B plus a cloud LLM) routes 95% of queries to the on-device SLM and reserves expensive cloud calls for the 5% that require broad knowledge. For enterprises processing millions of daily inference requests, the shift from per-API-call pricing to fixed-cost on-device deployment fundamentally alters AI economics.

- Proliferation of AI-capable edge hardware: The rapid deployment of NPUs across consumer and industrial devices is creating a massive installed base for SLM inference. Qualcomm’s Snapdragon 8 Elite delivers 45+ TOPS of AI performance, Apple’s M4 chip includes a 16-core Neural Engine at 38 TOPS, and NVIDIA’s Jetson platform supports production-grade edge inference for industrial applications. As of 2025, an estimated 75% of enterprise-generated data is processed outside traditional centralized data centers.

- Offline and connectivity-constrained deployment needs: Military, maritime, rural healthcare, mining, and agricultural applications often lack reliable internet connectivity. SLMs provide full AI functionality in completely disconnected environments, serving populations and industries that cloud-only AI cannot reach.
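
The TCO driver above can be made concrete with a small cost model. The sketch below uses the query volume and routing split from the retailer example (200,000 monthly interactions, 95% handled by the SLM); the per-query cloud price and the fixed monthly SLM cost are hypothetical placeholders, not figures from this report, and the class name `HybridCostModel` is ours.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HybridCostModel:
    monthly_queries: int
    slm_share: float               # fraction of queries served by the local SLM
    cloud_cost_per_query: float    # hypothetical per-call API price (USD)
    slm_fixed_monthly_cost: float  # hypothetical amortized hardware + ops cost (USD)

    def cloud_only_cost(self) -> float:
        """Monthly cost if every query goes to the cloud LLM."""
        return self.monthly_queries * self.cloud_cost_per_query

    def hybrid_cost(self) -> float:
        """Fixed SLM cost plus per-call cost for the queries escalated to the cloud."""
        cloud_queries = round(self.monthly_queries * (1 - self.slm_share))
        return self.slm_fixed_monthly_cost + cloud_queries * self.cloud_cost_per_query

    def reduction_factor(self) -> float:
        """How many times cheaper the hybrid setup is than cloud-only."""
        return self.cloud_only_cost() / self.hybrid_cost()


# Volume and split come from the example in the text; both prices are assumptions.
model = HybridCostModel(
    monthly_queries=200_000,
    slm_share=0.95,
    cloud_cost_per_query=0.01,
    slm_fixed_monthly_cost=300.0,
)
print(f"cloud-only: ${model.cloud_only_cost():,.0f}/month")
print(f"hybrid:     ${model.hybrid_cost():,.0f}/month")
print(f"reduction:  {model.reduction_factor():.1f}x")
```

The reduction factor is sensitive to both assumed prices: a higher fixed SLM cost or a cheaper cloud API narrows the gap, so the inputs should be replaced with measured values before drawing conclusions.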

Key Restraints

- Knowledge breadth limitations: SLMs inherently trade knowledge breadth for inference efficiency. Models under 3 billion parameters struggle with open-domain question answering, complex multi-step reasoning across diverse topics, and nuanced multilingual generation. This constrains their standalone applicability for general-purpose virtual assistant roles requiring encyclopedic knowledge.

- Hardware fragmentation and optimization complexity: The diversity of edge hardware—spanning Arm CPUs, Qualcomm Hexagon NPUs, Apple Neural Engine, NVIDIA CUDA GPUs, MediaTek APUs, and RISC-V processors—creates significant optimization overhead. Developers must maintain multiple quantized model variants, each tuned for specific hardware acceleration paths, increasing development cost and time-to-market.

- Thermal and power constraints limiting sustained inference: Unlike data center GPUs operating at 700W+ with active liquid cooling, mobile and IoT edge devices operate within 5–15W power envelopes. Sustained SLM inference can trigger thermal throttling on smartphones and wearables, degrading performance and draining batteries. This limits the duration and intensity of on-device AI interactions.

- Shortage of specialized AI engineering talent: Deploying, fine-tuning, and maintaining SLMs on edge hardware requires niche expertise spanning model compression techniques, hardware-specific optimization, and edge ML operations (MLOps). The global shortage of professionals with this combined skill set creates bottlenecks for enterprise adoption.

Key Trends

- Hybrid SLM-LLM orchestration architectures: The industry is converging on architectures where lightweight edge models handle routine inference while automatically escalating complex queries to cloud-hosted LLMs. This “small-at-edge, large-in-cloud” pattern optimizes for cost, latency, and capability simultaneously. Automatic routing based on query complexity is being built into mainstream frameworks.

- Multimodal SLMs processing text, vision, and speech simultaneously: Phi-4-multimodal (5.6 billion parameters) and the Gemma 3n models process text, images, video, and audio in a unified architecture on-device. This enables context-aware applications, from real-time visual question answering to on-device speech translation, without sending any data to the cloud.

- Reasoning-optimized compact models rivaling frontier LLMs: Distillation techniques using chain-of-thought traces from teacher models like o3-mini and DeepSeek-R1 are producing SLMs with strong reasoning capabilities. Microsoft’s Phi-4-reasoning-plus (14 billion parameters) outperforms OpenAI o1-mini on multiple math and science benchmarks, demonstrating that distillation and inference-time compute techniques can partly substitute for raw parameter scale.

- On-device fine-tuning and personalization: Techniques like QLoRA (4-bit base model with higher-precision adapters) and LoRA enable domain-specific customization of SLMs directly on edge devices. This allows models to adapt to individual user preferences, enterprise-specific terminology, or regional language patterns without transmitting training data off-device.
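
To see why LoRA-style adaptation is plausible on constrained hardware, it helps to count trainable parameters. A LoRA adapter on a weight matrix of shape `d_out x d_in` adds two low-rank factors with `rank * (d_in + d_out)` parameters in total, while the base weights stay frozen. The sketch below applies that formula to a hypothetical model; the hidden size, layer count, number of adapted matrices, and rank are illustrative assumptions, and treating every adapted matrix as square is a simplification.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in one LoRA adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


# Hypothetical model shape, chosen only for illustration.
HIDDEN = 2048            # width of each adapted (square) projection matrix
LAYERS = 16              # transformer blocks
ADAPTED_PER_LAYER = 4    # e.g. the four attention projections
RANK = 8                 # LoRA rank

n_matrices = LAYERS * ADAPTED_PER_LAYER
adapter_total = n_matrices * lora_trainable_params(HIDDEN, HIDDEN, RANK)
frozen_total = n_matrices * HIDDEN * HIDDEN

print(f"trainable adapter parameters: {adapter_total:,}")
print(f"frozen parameters in adapted matrices: {frozen_total:,}")
print(f"trainable fraction: {adapter_total / frozen_total:.2%}")
```

Only the adapter parameters need gradients and optimizer state, which is what keeps the training footprint small relative to full fine-tuning; with QLoRA the frozen base is additionally stored in 4-bit form.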

Market Segmentation

By Model Size

The sub-3B parameter segment represents the fastest-growing category, driven by deployment on smartphones, wearables, and IoT devices with tight memory and power constraints. Models such as Meta’s Llama 3.2 1B (running at 20–30 tokens per second on iPhone 12 and later, fitting in 650MB of RAM with 4-bit quantization), Google’s Gemma 3 270M, and Hugging Face’s SmolLM2 (135M–1.7B) demonstrate that models in this size range can handle practical tasks including text classification, smart reply generation, on-device summarization, and structured data extraction efficiently.
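
The link between parameter count, quantization, and memory can be approximated with simple arithmetic: weight storage is roughly the parameter count times bits per weight, divided by eight. The sketch below applies this to the nominal model sizes named in this report. It is a lower bound only, since it ignores quantization scales, the KV cache, activations, and runtime overhead; the helper name `weight_memory_mb` is hypothetical.

```python
def weight_memory_mb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in decimal megabytes (weights only)."""
    return num_params * bits_per_weight / 8 / 1e6


# Nominal sizes as named in the report; actual parameter counts may differ.
NOMINAL_PARAMS = {
    "Gemma 3 270M": 270e6,
    "Llama 3.2 1B": 1e9,
    "Phi-4-mini": 3.8e9,
    "Mistral 7B": 7e9,
    "Phi-4": 14e9,
}

for name, params in NOMINAL_PARAMS.items():
    fp16 = weight_memory_mb(params, 16)
    int4 = weight_memory_mb(params, 4)
    print(f"{name:<14} 16-bit: {fp16:>8,.0f} MB   4-bit: {int4:>7,.0f} MB")
```

The 650MB figure quoted above for Llama 3.2 1B is higher than the 4-bit estimate for a nominal one-billion-parameter model, which is consistent with the estimate being a floor rather than a full runtime footprint.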

The 3–7B segment serves as the current sweet spot for enterprise edge deployment, balancing capability with resource efficiency. Microsoft’s Phi-4-mini (3.8 billion parameters with 128K token context window), Mistral 7B, and Alibaba’s Qwen 2.5 7B (supporting 29 languages) deliver production-grade performance for customer service automation, code generation, document analysis, and multilingual applications. This segment accounts for the largest revenue share in 2025 as it aligns with available hardware on high-end smartphones and edge servers.

The 7–13B segment targets high-performance edge servers, workstations, and industrial deployments where greater reasoning depth is required. Slightly larger models such as Microsoft’s Phi-4 (14B parameters) and Phi-4-reasoning-vision-15B are grouped here alongside Mistral’s NeMo 12B and Google’s Gemma 3 12B; together they serve complex enterprise workflows including legal document analysis, medical diagnosis support, scientific research assistance, and advanced code review. These models require dedicated GPU or high-end NPU hardware but deliver near-frontier-model accuracy at a fraction of the cloud inference cost.

By Deployment Mode

On-device deployment is the fastest-growing mode, running SLMs directly on end-user hardware such as smartphones, smartwatches, AR/VR headsets, and embedded IoT sensors. This mode provides the highest privacy guarantees and lowest latency, as data never leaves the device. Qualcomm’s Snapdragon platform, Apple’s Neural Engine, and MediaTek’s Dimensity NPUs are the primary hardware enablers. Key use cases include offline translation, voice assistants, on-device content moderation, and personalized recommendations.

Edge server deployment involves running SLMs on dedicated hardware within enterprise premises—factory floors, hospital data rooms, retail store back offices, or telecom base stations. This mode supports higher-parameter models (7–13B) with greater context windows and handles multi-user concurrent inference. NVIDIA Jetson, Intel’s Xeon with integrated AI accelerators, and custom FPGA solutions dominate this segment. Manufacturing quality inspection, real-time patient monitoring, and autonomous store operations are typical applications.

Hybrid deployment combines on-device or edge server SLMs with cloud-hosted LLMs through intelligent routing. The SLM handles routine, latency-sensitive, or privacy-critical queries locally, while complex reasoning tasks are routed to cloud LLMs. This architecture is gaining rapid enterprise traction as it optimizes the tradeoff between cost, capability, and data sovereignty. Framework-level support for hybrid routing is being integrated into platforms such as BentoML, vLLM, and Microsoft Azure AI Foundry.
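
The routing rule described above (privacy-critical, latency-sensitive, and routine queries stay local; complex reasoning goes to the cloud) can be sketched as a small decision function. This is a minimal heuristic illustration, not how BentoML, vLLM, or Azure AI Foundry implement routing; every name, threshold, and keyword below is a hypothetical assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    LOCAL_SLM = "local_slm"
    CLOUD_LLM = "cloud_llm"


@dataclass(frozen=True)
class Query:
    text: str
    contains_sensitive_data: bool = False
    latency_budget_ms: Optional[int] = None  # None means no hard deadline


# Hypothetical thresholds; a real system would tune or learn these.
MAX_LOCAL_WORDS = 60
CLOUD_ROUND_TRIP_MS = 300
REASONING_HINTS = ("explain why", "compare", "step by step", "prove")


def route(query: Query) -> Route:
    """Pick a target model using the three criteria from the text."""
    # 1. Privacy-critical data never leaves the device.
    if query.contains_sensitive_data:
        return Route.LOCAL_SLM
    # 2. Deadlines shorter than an assumed cloud round trip must stay local.
    if query.latency_budget_ms is not None and query.latency_budget_ms < CLOUD_ROUND_TRIP_MS:
        return Route.LOCAL_SLM
    # 3. Otherwise escalate only queries that look complex.
    text = query.text.lower()
    looks_complex = len(text.split()) > MAX_LOCAL_WORDS or any(
        hint in text for hint in REASONING_HINTS
    )
    return Route.CLOUD_LLM if looks_complex else Route.LOCAL_SLM


print(route(Query("What are your opening hours?")))
print(route(Query("Compare these two supplier contracts and explain why one is riskier.")))
```

The ordering of the checks encodes a design choice: privacy and latency constraints act as hard overrides, so a complex but sensitive query is still answered locally, at the cost of a potentially weaker answer.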

By End-Use Industry

Consumer electronics represent the largest end-use segment by volume, with SLMs powering on-device features across smartphones, smart home devices, PCs, and wearables. Apple Intelligence, Google’s on-device Gemma integrations, Samsung’s Galaxy AI features, and Microsoft’s Copilot+ PC experiences driven by Phi Silica collectively touch billions of daily active users. Key functions include smart compose, photo captioning, on-device search, voice command processing, and real-time language translation.

Healthcare is a high-growth vertical driven by regulatory requirements for patient data privacy (HIPAA, EU MDR) and the need for real-time clinical decision support at the point of care. SLMs deployed on medical devices and hospital edge servers enable offline diagnostic assistance, clinical note summarization, drug interaction checking, and medical imaging triage without transmitting protected health information externally. Specialized medical SLMs such as Google’s MedGemma are being developed specifically for health AI applications.

Industrial applications leverage SLMs on edge gateways and production line controllers for predictive maintenance, real-time quality inspection narration, natural-language equipment control, and safety alert generation. The integration of SLMs with computer vision models on NVIDIA Jetson platforms enables intelligent automation in environments where cloud connectivity is unreliable or latency-intolerant. Automotive parts manufacturers have deployed fine-tuned Phi-3 7B models on Jetson devices to process inspection reports in real time.

The automotive sector is integrating SLMs into in-vehicle infotainment, driver assistance narration, and fleet management systems. Cerence AI’s CaLLM Edge represents a specialized embedded SLM for automotive applications, developed in collaboration with NVIDIA to optimize performance and reduce costs for automakers deploying generative AI solutions. Autonomous vehicle stacks use edge SLMs for natural-language scene description and passenger interaction.

By Geography

North America

North America dominates the SLM for edge deployment market with approximately 35% revenue share in 2025, driven by the concentration of leading model developers (Microsoft, Google, Meta, Apple) and AI semiconductor firms (Qualcomm, NVIDIA, Intel, AMD) headquartered in the United States. The region benefits from mature enterprise AI adoption, robust venture capital funding (AI chip startups raised over USD 5.1 billion in H1 2025 alone), and advanced 5G infrastructure enabling distributed edge computing. The U.S. government’s emphasis on AI leadership and Canada’s growing AI research ecosystem further strengthen regional dominance. Key adoption verticals include financial services, healthcare, defense, and technology.

Europe

Europe holds approximately 22% market share, with adoption strongly influenced by the GDPR regulatory framework that incentivizes on-device data processing. The region’s Industry 4.0 manufacturing base in Germany, France, and the Nordics drives edge AI deployment in smart factories. Mistral AI, headquartered in Paris, has emerged as a globally competitive SLM developer with strong data sovereignty positioning. The European Union’s AI Act and Digital Europe Programme (including the LLMs4Europe initiative with 70+ collaborators building open, multilingual European language models) are accelerating sovereign AI development and local edge deployment.

Asia-Pacific

Asia-Pacific is the fastest-growing region with an estimated CAGR exceeding 34% through 2030, fueled by massive smartphone penetration, government AI sovereignty programs, and surging demand for multilingual on-device intelligence. China’s Interim Measures for the Management of Generative Artificial Intelligence Services mandate on-shore model training, stimulating domestic SLM development from Alibaba (Qwen series), ByteDance, Baidu, and DeepSeek. India’s IndiaAI Mission provides GPU credits and open datasets to startups, while Japan’s Digital Garden City Nation strategy incentivizes high-impact AI. Alibaba’s Qwen 2.5 family, which supports 29 languages, is particularly well-positioned for the linguistically diverse APAC market. Edge SLMs resonate strongly in smartphone-centric markets like Indonesia and the Philippines.

Middle East and Africa

The Middle East is an emerging growth pocket, led by Saudi Arabia’s investments in AI infrastructure through initiatives such as Humain AI. The kingdom’s collaboration with Qualcomm on edge AI hardware and Arabic-language SLM optimization signals strategic commitment to regional AI capability. The UAE’s national AI strategy and growing smart city deployments provide additional tailwinds. Africa represents a nascent but high-potential market where offline-capable SLMs can address connectivity limitations in healthcare, agriculture, and education.

Latin America

Latin America is in the early stages of SLM edge adoption, with Brazil and Mexico leading. Growing digital transformation across financial services and retail, combined with expanding 5G rollout, is creating initial demand. The region’s interest in data sovereignty (influenced by Brazil’s LGPD privacy law) is beginning to drive on-premises and on-device AI deployment as an alternative to U.S.-hosted cloud inference.

How Competition Is Evolving

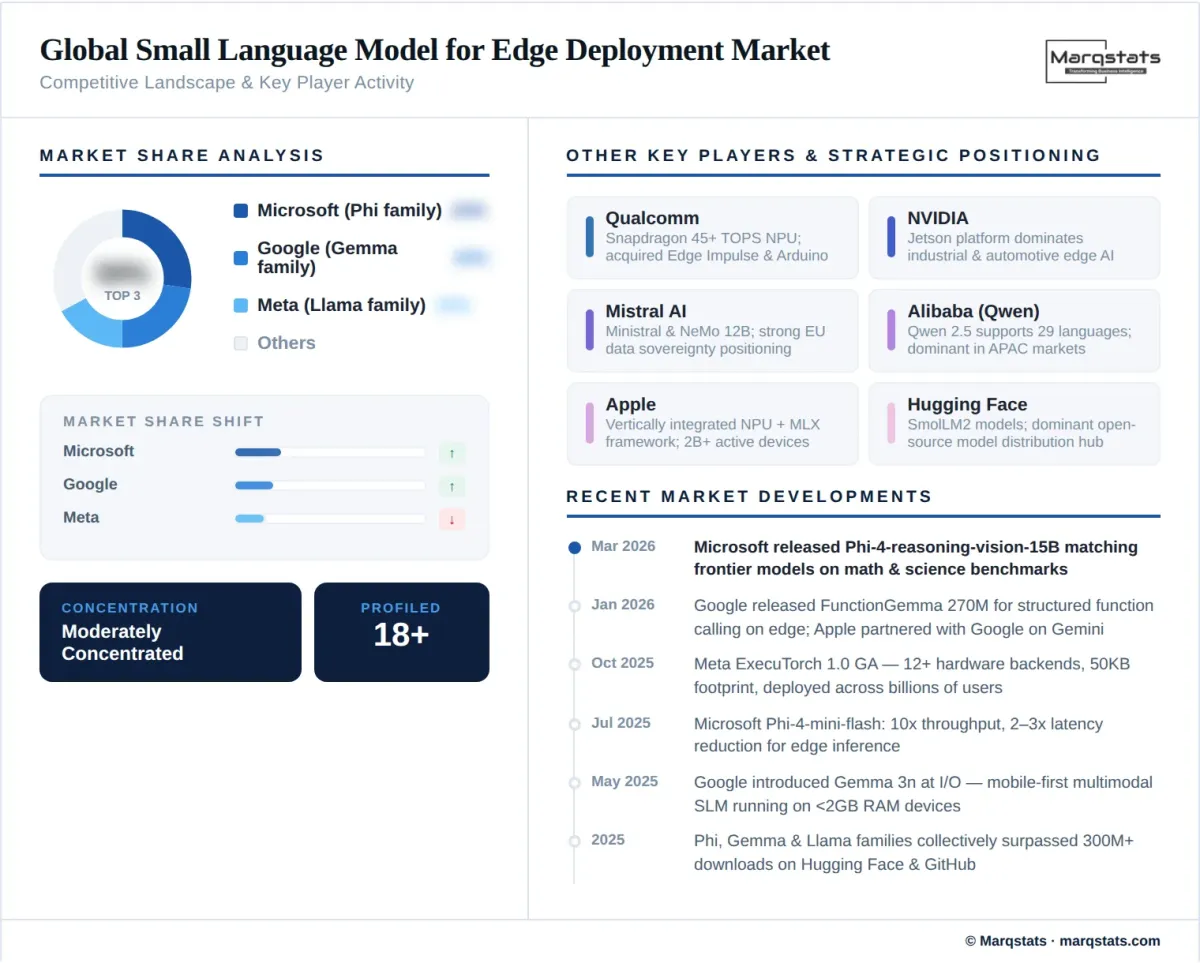

The SLM for edge deployment market is moderately concentrated at the model development layer, with a handful of hyperscalers and well-funded AI labs producing the most widely adopted base models, while the broader ecosystem of hardware enablers, framework developers, and vertical solution providers is highly fragmented. Microsoft, Google, and Meta dominate the open-weight SLM landscape through their Phi, Gemma, and Llama model families, respectively, which had surpassed 300 million cumulative downloads by early 2026. Mistral AI and Alibaba’s Qwen team represent significant competitive forces, particularly in European and Asian markets.

Competition is primarily structured around three strategic dimensions. The first is model quality per parameter: the ability to maximize task performance within tight compute budgets through data curation, synthetic training, and distillation techniques. Microsoft’s Phi family has been the most visible champion of this approach, consistently demonstrating that 14B-parameter models can rival systems many times their size. The second is hardware ecosystem integration: model developers who partner closely with silicon vendors (as Microsoft does with Qualcomm for Copilot+ PCs and NVIDIA for Jetson) gain distribution advantages. The third is deployment framework maturity: Meta’s ExecuTorch, Google’s LiteRT (formerly TensorFlow Lite), and the community-driven llama.cpp project compete to be the default on-device inference runtime.

The semiconductor competitive landscape is equally dynamic. Qualcomm is executing a strategic shift from premium cellular hardware provider to full-stack edge AI platform provider, with acquisitions of Edge Impulse and Arduino strengthening its ecosystem. NVIDIA’s Jetson platform dominates industrial and automotive edge inference, while Apple’s vertically integrated approach (custom NPUs plus the MLX framework) creates a closed but highly optimized ecosystem for its 2+ billion active devices. At the startup level, companies specializing in model compression, quantization tooling, and vertical-specific SLM fine-tuning are attracting significant venture capital as the market matures.

Companies Covered

The report profiles 18+ companies with full strategy and financial analysis, including:

Coverage & Segmentation

This report provides a comprehensive analysis of the global small language model (SLM) for edge deployment market covering the historical period 2021–2025 and the forecast period 2026–2030, with 2025 as the base year. The study examines market size and revenue forecasts, growth trends, competitive landscape dynamics, segment-level analysis by model size, deployment mode, and end-use industry, and region-level forecasts across North America, Europe, Asia-Pacific, Middle East & Africa, and Latin America. The research methodology combines bottom-up market sizing derived from hardware shipment data, model download metrics, and enterprise deployment surveys, validated against top-down estimates using industry body reports, patent filings, venture capital investment data, and company financial disclosures.

Primary research includes structured interviews with AI engineering leads at hyperscaler companies, semiconductor product managers, enterprise CTO and CIO decision-makers deploying edge AI solutions, and independent AI researchers specializing in model compression and on-device inference optimization. Secondary sources include IEEE and ACM conference proceedings, MLCommons benchmark publications, Hugging Face download statistics, government AI investment program documentation, and financial filings from publicly traded participants across the value chain.