Market Overview & Analysis

Report Summary

GPU-as-a-Service (GPUaaS) refers to the cloud-based delivery of graphics processing unit (GPU) compute resources on demand, enabling enterprises, researchers, and developers to access high-performance parallel processing capabilities without investing in on-premises hardware. GPUaaS encompasses infrastructure-as-a-service (IaaS) GPU instances, platform-as-a-service (PaaS) managed AI environments, and software-as-a-service (SaaS) API endpoints that abstract GPU complexity behind RESTful interfaces. The service model has become indispensable for workloads including AI model training and inference, scientific simulation, financial modeling, real-time rendering, autonomous vehicle development, and drug discovery.

The global GPUaaS market has evolved from a niche offering within broader cloud computing into a distinct, high-growth segment commanding dedicated infrastructure investment. In 2024, total GPUaaS revenue reached approximately USD 21 billion according to industry estimates, with hyperscale cloud providers accounting for roughly 76% of that figure. However, the competitive dynamics are shifting rapidly as specialized neocloud providers—purpose-built for GPU-intensive AI workloads—capture an expanding share by offering bare-metal performance, lower latency, and pricing advantages of 30–50% relative to hyperscaler equivalents. The transition from AI experimentation to production-scale deployment across Fortune 500 enterprises is accelerating demand, with AI inferencing workloads projected to outpace training workloads over the forecast period as more organizations deploy AI solutions at scale.
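The revenue split implied by these figures can be worked out directly. The sketch below is a minimal illustration that treats the 2024 total (USD 21 billion) and the hyperscaler share (76%) as exact inputs, although both are approximate estimates. The function and variable names are illustrative and do not come from the report.

```python
def split_revenue(total_usd_bn: float, hyperscaler_share: float) -> tuple[float, float]:
    """Split total revenue into hyperscaler and non-hyperscaler portions (USD billion)."""
    if not 0.0 <= hyperscaler_share <= 1.0:
        raise ValueError("hyperscaler_share must be between 0 and 1")
    hyperscaler = total_usd_bn * hyperscaler_share
    return hyperscaler, total_usd_bn - hyperscaler


# Inputs taken from the paragraph above; both are approximate estimates.
hyperscaler_bn, other_bn = split_revenue(21.0, 0.76)
print(f"Hyperscalers:    ~USD {hyperscaler_bn:.1f} billion")
print(f"Other providers: ~USD {other_bn:.1f} billion")
```

Under these inputs, roughly USD 16 billion of 2024 revenue went to hyperscalers and about USD 5 billion to all other providers, including neoclouds.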

The market encompasses a diverse ecosystem of providers ranging from global hyperscalers (AWS, Azure, Google Cloud, Oracle Cloud, IBM Cloud) to vertically specialized neoclouds (CoreWeave, Lambda Labs, Nebius, Crusoe Cloud, Vultr), hardware-led offerings (NVIDIA DGX Cloud), and telecoms operators entering the GPUaaS space. NVIDIA’s dominance across the GPU hardware stack—with its H100, H200, Blackwell (GB200/GB300), and forthcoming Rubin architectures—creates a technology axis around which the entire GPUaaS ecosystem revolves. The interplay between GPU supply constraints, exponentially growing AI compute demand, and evolving cloud delivery models defines the strategic landscape through 2030.

Market Dynamics

Key Drivers

- Exponential growth in AI and ML workloads: The proliferation of generative AI, large language models, and reasoning models is driving unprecedented demand for GPU compute. OpenAI’s multi-billion-dollar infrastructure contracts with CoreWeave (USD 22.4 billion total) exemplify the scale of compute procurement required for frontier AI development. Enterprise AI adoption is moving from pilot programs to production deployment, with AI inferencing demand growing faster than training demand.

- Cost optimization through pay-as-you-go models: GPUaaS eliminates the need for enterprises to invest USD 200,000–400,000 per high-end GPU server, instead offering consumption-based billing starting at approximately USD 0.66/hour for A100 instances and USD 4.00+/hour for H100 configurations. This shifts GPU spending from capital expenditure to operational expenditure, enabling organizations to scale compute resources dynamically based on workload intensity.

- Government-led sovereign AI infrastructure investment: National governments are treating GPU compute as critical infrastructure. France has committed EUR 109 billion to AI infrastructure under its France 2030 plan, South Korea partnered with NVIDIA to deploy over 260,000 GPUs across sovereign clouds, and the U.S. Stargate initiative represents a USD 500 billion investment in national AI capacity. These programs create substantial, policy-backed demand for GPUaaS deployments.

- Rapid advancement in GPU architectures: NVIDIA’s progression from Hopper (H100/H200) to Blackwell (GB200/GB300) and announced Rubin (2026) and Feynman (2028) platforms delivers generational performance leaps that expand the addressable workload spectrum. Each architecture generation enables new use cases—from agentic AI to real-time reasoning models—that drive incremental GPUaaS consumption.

- Cloud gaming and metaverse acceleration: The gaming and media rendering vertical is growing at over 30% CAGR as platforms like Epic Games’ Unreal Engine 5 leverage cloud-based GPUs for photorealistic virtual productions, and cloud gaming services require elastic GPU resources to deliver low-latency, high-fidelity streaming experiences to end users.
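The capital-versus-operating-expenditure trade-off described in the cost optimization driver can be made concrete with a rough break-even estimate. The sketch below is a simplification under stated assumptions: it assumes 8 GPUs per server and reads the quoted USD 4.00/hour H100 rate as a per-GPU price, neither of which the report specifies. It also ignores power, cooling, staffing, and depreciation on the ownership side.

```python
def break_even_hours(server_cost_usd: float, gpus_per_server: int,
                     rate_per_gpu_hour: float) -> float:
    """Hours of whole-server rental whose cumulative cost equals the purchase price."""
    if gpus_per_server <= 0 or rate_per_gpu_hour <= 0:
        raise ValueError("gpus_per_server and rate_per_gpu_hour must be positive")
    return server_cost_usd / (gpus_per_server * rate_per_gpu_hour)


GPUS_PER_SERVER = 8   # assumption: not stated in the report
H100_RATE = 4.00      # USD per hour from the report, assumed here to be per GPU

# Server price range quoted in the report: USD 200,000 to 400,000.
for cost in (200_000, 400_000):
    hours = break_even_hours(cost, GPUS_PER_SERVER, H100_RATE)
    print(f"USD {cost:,} server: rental matches purchase price after {hours:,.0f} hours")
```

With these assumptions the rental bill reaches the purchase price after 6,250 to 12,500 hours of continuous full-server use. Because ownership also carries operating costs that are excluded here, the real break-even point for buying would come later.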

Key Restraints

- GPU supply chain constraints and semiconductor shortages: Global demand for high-end GPUs consistently outpaces manufacturing capacity. Events such as the January 2025 Taiwan earthquake, which damaged TSMC facilities and destroyed over 30,000 high-end wafers, severely disrupted NVIDIA Blackwell production timelines. Supply bottlenecks create allocation challenges for GPUaaS providers, limiting capacity expansion and driving price volatility.

- High energy consumption and data center power limitations: GPU-intensive data centers require substantially more power than traditional cloud facilities, with next-generation racks demanding 130kW+ per rack for liquid-cooled GPU clusters. The International Energy Agency projects global data center energy consumption to exceed 945 TWh by 2030, and power availability is becoming a primary bottleneck for GPUaaS expansion in constrained grid environments.

- Vendor lock-in and NVIDIA dependency: NVIDIA commands over 90% of the data center GPU market, creating concentration risk for GPUaaS providers and enterprise customers. The ecosystem’s reliance on CUDA software, InfiniBand networking, and NVIDIA-specific architectures limits portability across providers and raises strategic vulnerability concerns.

- Emergence of custom AI accelerators (ASICs): Hyperscalers including Alphabet (TPUs), Amazon (Trainium/Inferentia), and Microsoft (Maia) are developing proprietary AI chips that could divert workloads from GPU-based cloud instances over the medium term, potentially reducing hyperscaler reliance on third-party GPUaaS for internal AI workloads.
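To give the power restraint a sense of scale, the following sketch converts the 130 kW rack figure into annual energy. It is an illustration only: it assumes continuous operation at full load by default and counts IT load alone, with no allowance for cooling or other facility overhead. The function name and the utilization parameter are introduced here for the example.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def annual_rack_energy_gwh(rack_kw: float, utilization: float = 1.0) -> float:
    """Annual energy drawn by one rack, in GWh, at a given average utilization."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    kwh = rack_kw * utilization * HOURS_PER_YEAR
    return kwh / 1_000_000  # 1 GWh = 1,000,000 kWh


rack_gwh = annual_rack_energy_gwh(130.0)
racks_per_twh = 1_000 / rack_gwh  # 1 TWh = 1,000 GWh
print(f"One 130 kW rack at full load: {rack_gwh:.2f} GWh per year")
print(f"Racks needed to draw 1 TWh per year: about {racks_per_twh:.0f}")
```

A single 130 kW rack running flat out draws about 1.14 GWh per year, so fewer than a thousand such racks account for a terawatt-hour of annual demand.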

Key Trends

- Rise of neocloud providers: Specialized GPU cloud companies such as CoreWeave, Lambda Labs, Nebius, and Crusoe Cloud have emerged as serious competitors to hyperscalers, collectively raising billions in capital. CoreWeave alone has secured over USD 12 billion in funding, completed a Nasdaq IPO, and signed infrastructure deals with OpenAI (USD 22.4 billion), Meta (USD 14.2 billion), and NVIDIA. Neocloud revenue is projected to surpass USD 250 billion by 2030.

- Shift from AI training to inference dominance: While AI training workloads continue to grow as model developers create larger and more complex models, AI inference demand is accelerating faster as enterprise and consumer AI adoption expands. The growing size of reasoning models—which perform more computation per output—amplifies inference-side GPU consumption, with inferencing expected to command the majority of GPUaaS compute by 2028.

- Liquid cooling as standard infrastructure: Next-generation GPU deployments (Blackwell GB300, Rubin) require liquid cooling as air cooling cannot dissipate heat from 130kW+ racks. Data center operators are retrofitting facilities with direct-to-chip and immersion cooling systems, and new builds are designed around liquid cooling from foundation to roof, establishing a new baseline for GPUaaS facility design.

- Sovereign AI and data residency requirements: Geopolitical tensions and data privacy regulations (EU AI Act, GDPR, sector-specific mandates) are driving demand for domestically hosted GPU infrastructure. Sovereign cloud programs across Europe, Asia-Pacific, and the Middle East are creating new regional GPUaaS markets and favoring providers with local data center presence and jurisdictional compliance.

Market Segmentation

By Service Model

The IaaS segment commands the largest market share within GPUaaS, delivering raw virtual machine instances and bare-metal GPU servers that provide enterprises with direct access to GPU hardware resources. AWS EC2 GPU instances, Azure ND/NC-series VMs, and Google Cloud GPU VMs represent the hyperscaler IaaS tier, while neoclouds like CoreWeave deliver Kubernetes-native bare-metal access that eliminates hypervisor overhead. The IaaS model appeals to organizations with mature AI/ML engineering teams capable of managing their own software stacks and orchestration frameworks.

The PaaS segment is the fastest-growing service model, expanding at over 30% CAGR as managed AI environments gain traction among enterprises seeking to accelerate development without deep infrastructure expertise. Platforms such as Amazon SageMaker, Azure Machine Learning, Google Vertex AI, and Rafay’s GPU PaaS integrate cluster scheduling, cost governance, ML-specific observability, and root-level CUDA access within managed environments. PaaS offerings reduce the DevOps burden on AI teams, enabling faster time-to-model and more efficient resource utilization.

SaaS-based GPU offerings provide turnkey inference endpoints and pre-built AI services that hide GPU complexity behind RESTful API calls. Vision classification, speech-to-text, natural language processing, and generative AI endpoints allow developers to consume GPU-accelerated capabilities without managing any infrastructure. This segment accounts for a significant share of 2025 revenue as enterprises embed AI capabilities into applications through API-first approaches, particularly in industries such as e-commerce, customer service, and content moderation.
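As a rough picture of what consuming such an endpoint looks like from the developer side, the sketch below builds a JSON POST request using only the Python standard library. Everything provider-specific in it is hypothetical: the URL, the payload fields, and the bearer-token header are placeholders and do not describe any real vendor's API. The request is constructed but not sent.

```python
import json
import urllib.request

# Hypothetical endpoint and payload schema, for illustration only.
ENDPOINT = "https://api.example.com/v1/speech-to-text"


def build_inference_request(audio_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a hosted inference endpoint."""
    payload = json.dumps({"audio_url": audio_url, "language": "en"}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=payload,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_inference_request("https://example.com/sample.wav", "YOUR_API_KEY")
print(request.get_method(), request.full_url)
# Sending would be urllib.request.urlopen(request); omitted because the endpoint is fictitious.
```

The caller never selects a GPU type, driver version, or cluster size. That choice stays with the provider, which is the abstraction this service model sells.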

By Deployment Model

Public cloud deployment dominates the GPUaaS market with approximately 50% revenue share, driven by its scalability, pay-as-you-go economics, and rapid provisioning capabilities. Hyperscalers and neoclouds alike deliver GPU instances through multi-tenant public cloud environments that enable burst computing for training runs and auto-scaling for inference workloads. Public cloud is the preferred model for startups, SMEs, and research institutions that require flexible access without long-term commitments.

Private cloud GPU deployments serve enterprises with stringent data security, regulatory compliance, and intellectual property protection requirements. Financial institutions, defense agencies, and pharmaceutical companies deploy dedicated GPU clusters within isolated cloud environments to maintain full control over data and infrastructure. NVIDIA DGX Cloud and enterprise-grade offerings from hyperscalers provide managed private cloud GPU solutions that combine dedicated hardware with cloud-like operational simplicity.

Hybrid cloud is the fastest-growing deployment model, enabling organizations to balance on-premises GPU infrastructure for sensitive data processing with public cloud GPU resources for scalable training workloads. Healthcare organizations leveraging hybrid architectures can process patient data locally while utilizing cloud GPUs for AI model training. NVIDIA’s AI Enterprise platform enables seamless workload deployment across hybrid environments, and enterprises are increasingly adopting multi-cloud GPU strategies to optimize cost, performance, and vendor diversification.

By Enterprise Size

Large enterprises account for the majority of GPUaaS revenue, securing reserved-capacity contracts and negotiating multi-year GPU allocation commitments. Multinational banks, automotive OEMs, pharmaceutical companies, and technology conglomerates lock in dedicated GPU clusters for predictable AI roadmaps, often establishing data center colocation arrangements or direct supply guarantees with providers. The large enterprise segment benefits from volume-based pricing and priority access to next-generation GPU architectures.

The SME segment is growing at approximately 29% CAGR, driven by the democratizing effect of consumption-based billing and serverless GPU offerings that eliminate the need for DevOps headcount. Startups in fintech, e-commerce, healthtech, and SaaS are leveraging GPUaaS to integrate vision models, recommendation engines, and generative AI capabilities into products within days. Competitive pricing at sub-USD 1.00/hour for mid-range instances further lowers entry barriers, enabling lean teams to access enterprise-grade GPU compute.

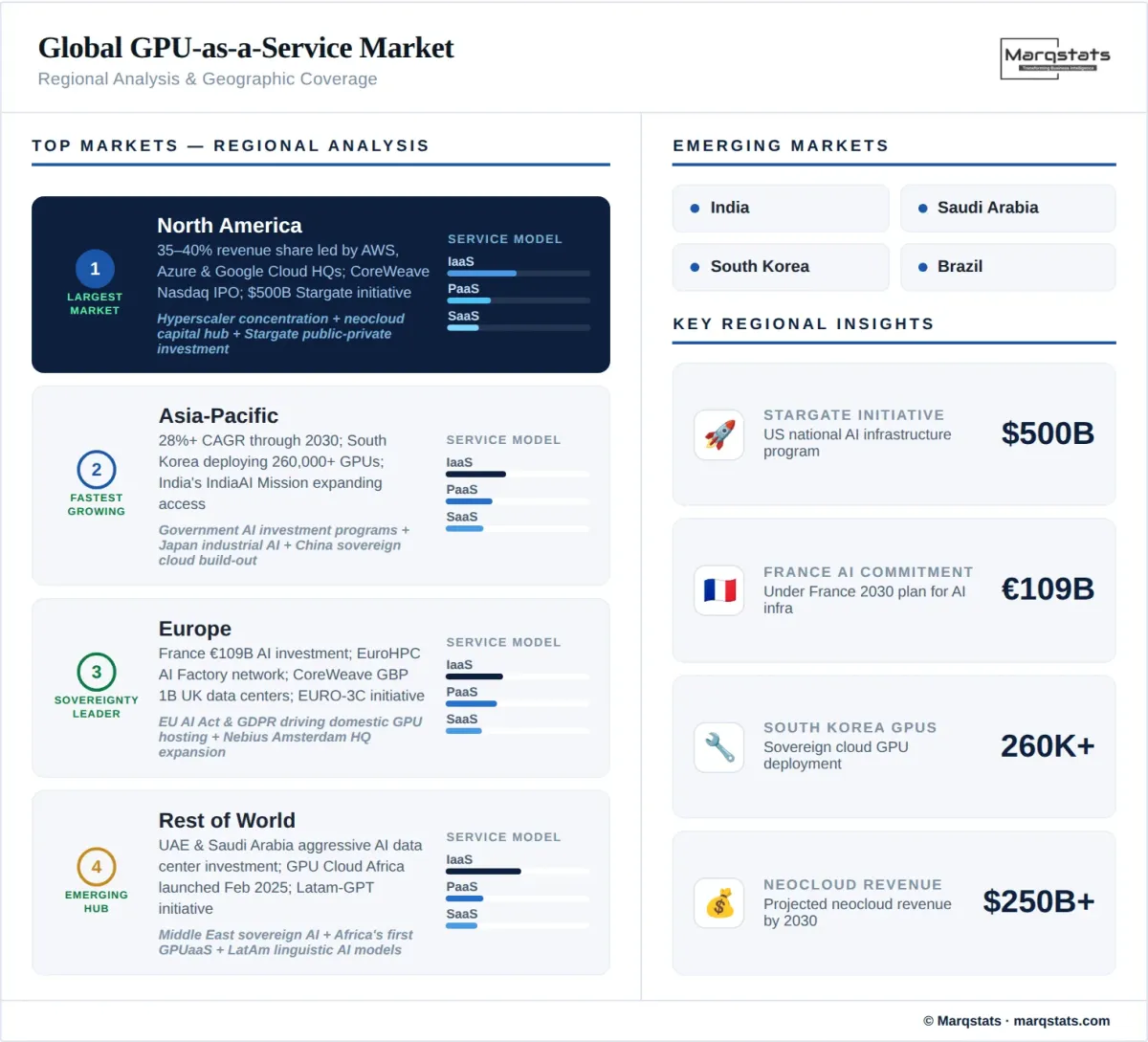

By Geography

North America

North America commands the largest share of the global GPUaaS market at approximately 35–40% of revenue, underpinned by the region’s advanced technology ecosystem, concentration of hyperscaler headquarters, and pioneering AI adoption across industries. The United States drives the overwhelming majority of regional demand and is home to AWS, Microsoft Azure, Google Cloud, and Oracle Cloud, as well as leading neoclouds CoreWeave, Lambda Labs, and Crusoe Cloud. The Stargate initiative, announced in January 2025 as a USD 500 billion AI infrastructure program involving NVIDIA, OpenAI, Microsoft, and Oracle, ranks among the most ambitious compute investments announced to date. CoreWeave’s March 2025 Nasdaq IPO and the subsequent USD 2 billion NVIDIA investment in January 2026 further cemented the region’s dominance. Canada contributes through expanding AI research hubs in Toronto and Montreal, supported by federal AI investment programs.

Europe

Europe represents a significant and rapidly evolving GPUaaS market, shaped by the dual imperatives of AI competitiveness and data sovereignty. The EU AI Act and GDPR create a regulatory framework that favors domestically hosted GPU infrastructure, driving demand for sovereign cloud solutions. France leads European investment with EUR 109 billion committed to AI under its France 2030 plan, including partnerships with FluidStack to build a 500,000-chip AI supercomputer. The EuroHPC Joint Undertaking is expanding its AI Factory network of GPU-equipped supercomputers accessible to startups and universities. Germany’s T-Systems Open Telekom Cloud, OVHcloud’s sovereign approach, and Nebius’s Amsterdam-headquartered European expansion reflect the region’s commitment to building independent GPU capacity. The United Kingdom has attracted significant neocloud investment, with CoreWeave committing GBP 1 billion to UK data centers powered by NVIDIA H200 GPUs. The EU’s EURO-3C federated cloud initiative, announced at Mobile World Congress in March 2026, represents a coordinated effort to reduce reliance on U.S. and Chinese technology providers.

Asia-Pacific

Asia-Pacific is the fastest-growing regional GPUaaS market, expanding at over 28% CAGR through 2030. China, India, Japan, and South Korea lead regional adoption, supported by massive government AI investment programs. South Korea partnered with NVIDIA and domestic companies in late 2025 to deploy over 260,000 GPUs across sovereign clouds and AI factories, with SK Telecom’s Haein GPU cluster supporting the national Sovereign AI Foundation Model Project. India’s IndiaAI Mission and growing domestic GPUaaS ecosystem—including partnerships between Radian Arc and GTPL Broadband for GPU edge infrastructure deployment—are accelerating access to AI compute for enterprises across the subcontinent. Japan’s strong semiconductor heritage and industrial AI adoption in automotive, robotics, and manufacturing drive significant GPUaaS demand. The region’s combination of large-scale government programs, rapidly growing enterprise AI adoption, and emerging domestic GPU cloud providers positions Asia-Pacific as a critical growth engine for the global GPUaaS market.
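A compound annual growth rate translates into a cumulative multiple through simple compounding. The sketch below is illustrative: it assumes a five-year horizon from the 2025 base year to 2030 and treats 28% as an exact constant rate, whereas the report states "over 28%". The function names are introduced for the example.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Cumulative growth factor after compounding at a constant annual rate."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return (1.0 + cagr) ** years


def project(base_value: float, cagr: float, years: int) -> float:
    """Project a base-year value forward at a constant CAGR."""
    return base_value * growth_multiple(cagr, years)


# Assumption: 2025 base year to 2030, i.e. five compounding periods.
multiple = growth_multiple(0.28, 5)
print(f"28% CAGR over 5 years: {multiple:.2f}x the base-year value")
```

At a constant 28%, regional revenue in 2030 would be roughly 3.4 times its 2025 level. The same function applies to the other growth rates quoted in the report once a base value and horizon are fixed.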

Rest of World

Emerging markets across the Middle East, Latin America, and Africa are posting strong GPUaaS growth as infrastructure development and local AI ecosystems mature. The UAE and Saudi Arabia are investing aggressively in AI data centers, with NVIDIA partnerships supporting regional sovereign AI initiatives. In Africa, ST Digital launched GPU Cloud Africa in February 2025 as the continent’s pioneering GPUaaS platform, signaling the beginning of localized GPU compute availability. Latin American initiatives including Latam-GPT reflect the region’s ambition to develop culturally and linguistically appropriate AI models on sovereign infrastructure. While these regions currently represent a small share of global GPUaaS revenue, their double-digit growth rates indicate significant long-term potential.

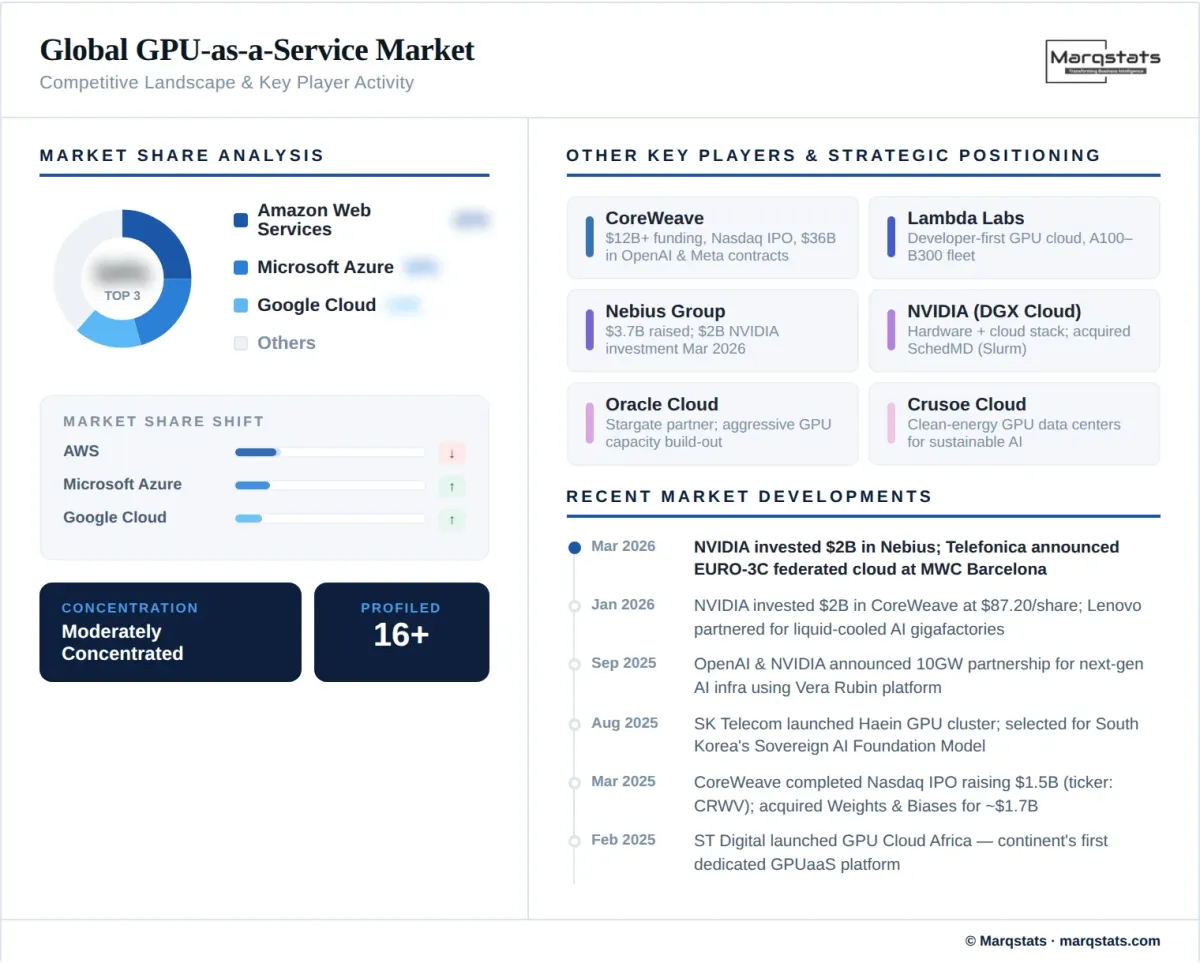

How Competition Is Evolving

The GPUaaS market operates as a moderately concentrated ecosystem, with the top five hyperscalers—Amazon Web Services, Microsoft Azure, Google Cloud, IBM Cloud, and Oracle Cloud—collectively commanding approximately 54–62% of total market revenue through 2025. These incumbents leverage their global data center footprints, established enterprise relationships, comprehensive service portfolios, and bundled AI platform offerings (SageMaker, Azure Machine Learning, Vertex AI) to maintain dominant positions. However, hyperscaler market share is projected to decline from 76% in 2024 to approximately 63% by 2030 as specialized neocloud providers capture an expanding portion of GPU-intensive workloads.

The neocloud insurgency represents the most transformative competitive dynamic in the market. CoreWeave has emerged as the undisputed neocloud leader, with over USD 12 billion in total funding, a March 2025 Nasdaq IPO (ticker: CRWV), a USD 2 billion NVIDIA investment in January 2026, and infrastructure contracts totaling over USD 36 billion with OpenAI and Meta. Lambda Labs competes on developer experience and transparent pricing, maintaining a broad GPU fleet that spans A100 through B300 GPUs. Nebius, headquartered in Amsterdam, raised USD 1.7 billion in combined funding (2024–2025) and secured a USD 2 billion NVIDIA strategic investment in March 2026, positioning itself to capture European sovereign AI demand. Competition is intensifying across pricing, speed of GPU availability, networking performance (InfiniBand vs. Ethernet), and vertical specialization.

Strategic M&A activity is reshaping the landscape. CoreWeave’s acquisition of AI developer platform Weights & Biases for approximately USD 1.7 billion and physics AI firm Monolith AI, combined with its agreement to acquire Core Scientific, signals a push toward full-stack AI cloud platforms that integrate infrastructure with developer tooling and workload orchestration. NVIDIA’s December 2025 acquisition of SchedMD (developer of the Slurm workload manager) extended its reach deeper into the AI software stack. Telecoms operators including SK Telecom, Deutsche Telekom, and approximately 30 others globally have launched GPUaaS offerings, targeting sovereign AI demand and edge compute use cases, though telecoms GPUaaS revenue remains nascent at under USD 100 million in 2024.

Companies Covered

The report profiles 16+ companies with full strategy and financial analysis.

Coverage & Segmentation

This report provides a comprehensive analysis of the global GPU-as-a-Service (GPUaaS) market covering the historical period (2021–2025), base year (2025), and forecast period (2026–2030). The study examines market size estimations and growth projections in USD billion, segmented by service model (IaaS, PaaS, SaaS), deployment model (public cloud, private cloud, hybrid cloud), GPU type (high-end, mid-range, entry-level), enterprise size (large enterprises, SMEs), end-user vertical (BFSI, healthcare, IT and telecommunications, automotive, gaming, media and entertainment, and others), and geography (North America, Europe, Asia-Pacific, and Rest of World). The competitive landscape analysis profiles hyperscalers, neocloud providers, hardware-led offerings, and telecoms-operated GPUaaS platforms.

The research methodology combines bottom-up market sizing validated against top-down estimates, drawing from cloud provider financial disclosures, GPU shipment data, enterprise AI adoption surveys, and data center capacity analyses. Primary research includes consultations with cloud infrastructure executives, AI platform architects, enterprise IT decision-makers, and GPU semiconductor analysts. Secondary sources include cloud provider earnings reports, semiconductor industry databases, government AI policy documents, data center energy consumption projections from the International Energy Agency, and trade publications covering cloud computing and AI infrastructure markets.