Market Overview & Analysis

Report Summary

The automotive AI box market encompasses three overlapping product layers: (1) aftermarket/installed-base AI boxes that upgrade older in-vehicle infotainment (IVI) systems or lower-end vehicles with AI features and smoother cockpit performance, typically via USB-connected add-ons; (2) OEM supplemental AI boxes that sit beside an existing cockpit platform to add large-model capability without redesigning the full E/E stack; and (3) AI-box-equivalent compute nodes that blur into cross-domain or central compute modules, as seen in NIO’s N-Box, ECARX’s cockpit/ADAS platforms, and emerging central vehicle computers. This report explicitly distinguishes automotive-grade AI boxes (edge AI compute modules with safety certification) from consumer “Android AI boxes” (CarPlay/Android Auto dongles), which serve a different market and use case. The market scope covers hardware modules, embedded software/middleware, AI toolchains, and integration services across passenger and commercial vehicles globally.

The broader automotive AI market is projected to grow from approximately USD 5–19 billion in 2025 (depending on scope definition) to USD 15–38 billion by 2030, according to multiple analyst estimates. The automotive AI box market represents the edge compute hardware and middleware layer within this ecosystem: the physical and software infrastructure that enables local AI inference, model scheduling, and mixed-criticality workload execution inside the vehicle. The market’s commercial logic sits at the intersection of two forces: a short-cycle retrofit/supplement opportunity (adding AI capability to existing and mid-tier vehicles quickly) and a long-cycle architectural consolidation trend (migration toward centralised software-defined vehicle (SDV) platforms that subsume standalone boxes). Automotive software and electronics are transitioning to zonal and central computing architectures that enable over-the-air (OTA) updates, connectivity, and GenAI integration, and the total automotive software and electronics ecosystem is estimated to reach USD 519 billion by 2035.
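
The growth rate implied by these ranges can be checked with the standard compound annual growth rate (CAGR) formula. The sketch below assumes that the low end of the 2025 range pairs with the low end of the 2030 range, and the high end with the high end; the underlying analyst estimates do not confirm this pairing, and the function name `cagr` is purely illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% a year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


YEARS = 2030 - 2025  # five compounding periods

# Assumption: low pairs with low, high pairs with high (USD billions).
narrow_scope = cagr(5.0, 15.0, YEARS)
broad_scope = cagr(19.0, 38.0, YEARS)

print(f"narrow scope definition: {narrow_scope:.1%}")
print(f"broad scope definition:  {broad_scope:.1%}")
```

Under that pairing, the narrow scope implies growth of roughly 25% a year and the broad scope roughly 15% a year, which shows how strongly the headline growth rate depends on the scope definition.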

Market Dynamics

Key Drivers

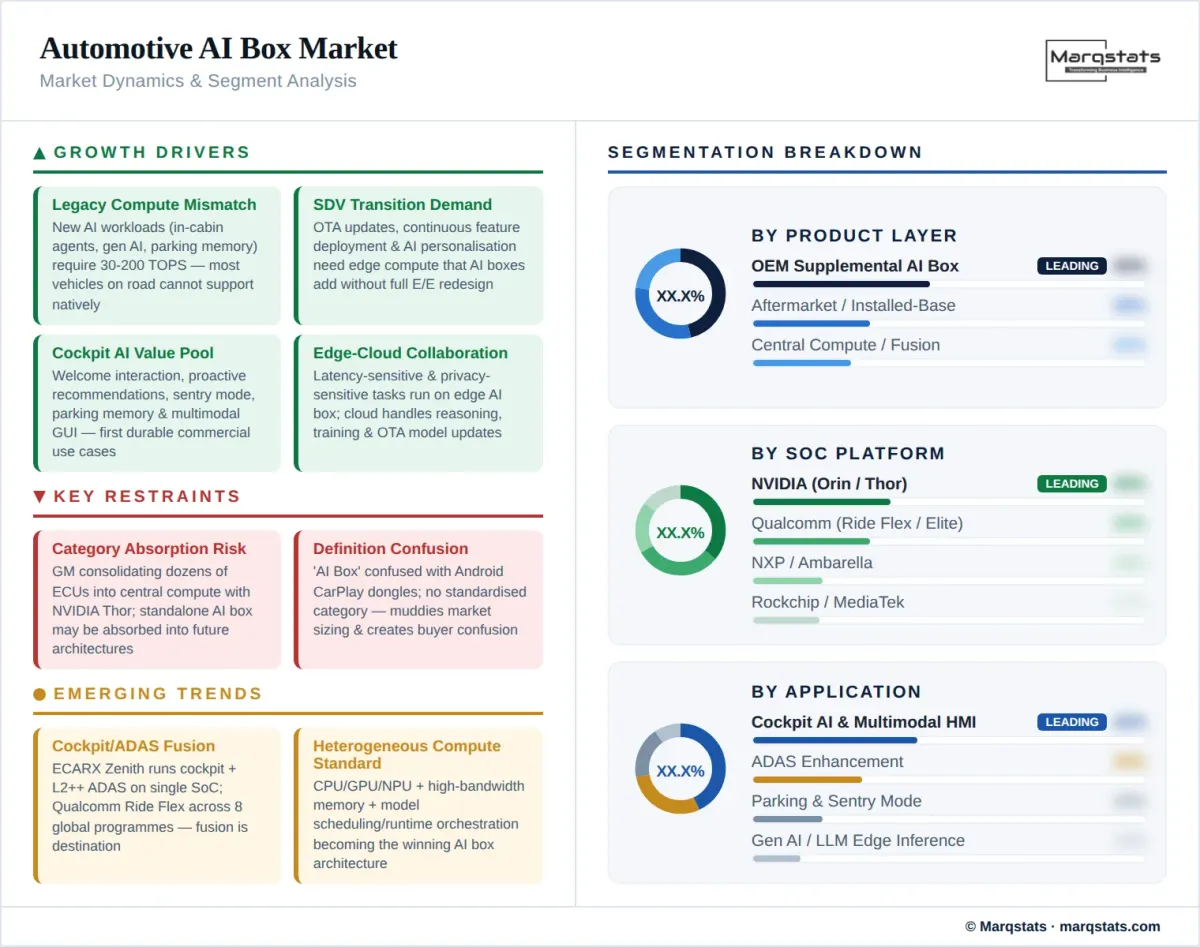

- Mismatch between new AI workloads and legacy in-vehicle compute: The immediate demand driver is that older vehicle hardware cannot support newer AI features such as in-cabin agents, generative AI assistants, complex multimodal scenarios, and real-time parking memory. Automotive AI is increasingly split into edge-cloud collaboration, where edge handles latency-sensitive, privacy-sensitive, and real-time tasks while the cloud handles heavier reasoning, model optimisation, and data analysis. This creates a structural opening for supplemental edge compute modules—AI boxes—that can be added to existing platforms without full E/E redesign.

- Software-defined vehicle (SDV) transition accelerating demand for edge AI hardware: The automotive industry is moving toward SDV architectures with OTA updates, continuous feature deployment, and AI-powered personalisation. Future E/E architectures are shifting away from many domain-specific ECUs toward a few powerful vehicle computers connected through zone ECUs. The AI box serves as a transitional hardware layer: it lets OEMs deploy AI features on vehicles designed before SDV architectures existed, while future platforms absorb this capability into centralised compute.

- Cockpit AI and multimodal HMI creating the first durable value pool: The first commercially viable use cases are cockpit-first, not robotaxi-first: welcome interaction, proactive recommendation, enhanced sentry mode, high-precision parking memory (HPA), and GUI interaction. These workloads can share compute and justify the bill of materials faster than standalone edge-LLM products. NXP lists ADAS, infotainment, in-cabin sensing, audio intelligence, vision, predictive maintenance, and personalised driver experiences among its edge AI use cases. This cockpit-centric value proposition drives near-term attach rates.

- China’s rapid commercialisation creating proof points and scale: China is the earliest and most explicit commercial test bed for the “AI Box” label. ThunderSoft’s Geely/NVIDIA AIBOX debuted at IAA Mobility in September 2025, billed as an industry-first mass-production solution. ADAYO and BICV are notable suppliers in China’s OEM supplemental market. NIO’s N-Box demonstrates how AI-box-equivalent compute nodes are being embedded in premium EV architectures. Huawei’s HMS for Car platform deploys AI BOX as one of four core components (MAP BOX, Service Box, AI BOX, Net BOX) across Chinese brands such as Chery, Great Wall Motor, and Changan, with international expansion including BIMS 2026 in Thailand.

- Automotive AI hardware growing at fastest CAGR (27.1%) within the broader AI chip market: The automotive sector represents the fastest-growing vertical for AI hardware according to multiple industry estimates, driven by the combination of safety mandates, ADAS proliferation, connected-car growth, and the electrification wave that makes vehicles more software-intensive. This creates a secular tailwind for edge compute modules across all three product layers of the automotive AI box market.
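
The edge-cloud split described in the first driver can be sketched as a simple placement rule. This is a minimal illustration, not any vendor's scheduler: the `Task` fields, the `place` function, the task names, and the 200 ms round-trip threshold are all assumptions introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    max_latency_ms: float    # deadline the feature must meet
    privacy_sensitive: bool  # e.g. raw cabin camera or audio data
    connected: bool = True   # whether a cloud link is currently available


# Hypothetical budget: tasks with tighter deadlines cannot wait for the cloud.
CLOUD_ROUND_TRIP_MS = 200.0


def place(task: Task) -> str:
    """Return "edge" or "cloud" for a task under the assumed policy."""
    if task.privacy_sensitive:
        return "edge"  # keep sensitive data inside the vehicle
    if task.max_latency_ms < CLOUD_ROUND_TRIP_MS:
        return "edge"  # too latency-sensitive for a network round trip
    if not task.connected:
        return "edge"  # no link available, fall back to local inference
    return "cloud"     # heavier reasoning and analytics go off-board


tasks = [
    Task("driver_monitoring", max_latency_ms=50, privacy_sensitive=True),
    Task("voice_wake_word", max_latency_ms=100, privacy_sensitive=False),
    Task("trip_summary_generation", max_latency_ms=5000, privacy_sensitive=False),
]
placements = {t.name: place(t) for t in tasks}
print(placements)
```

With these illustrative inputs, only the trip summary is sent to the cloud; the other two stay on the edge node, which is the role the AI box plays in this architecture.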

Key Restraints

- Category absorption risk as central compute and cockpit/ADAS fusion mature: The long-term destination architecture is not many separate AI boxes but fewer, more powerful centralised compute nodes. GM announced in October 2025 that its next architecture would consolidate dozens of ECUs into a unified central compute core with NVIDIA Thor. Qualcomm’s Ride Flex was commercialised across eight global programs by January 2026. ECARX’s Zenith platform runs cockpit and L2++ ADAS together on a single SoC. As these centralised platforms mature, the standalone AI box category risks being absorbed—meaning vendors must position as architecture components, not standalone products.

- Lack of standardised category definition creating market confusion: “AI Box” is less standardised than terms like cockpit domain controller, ADAS domain controller, or central vehicle computer. Outside China, the same economic function is described as “centralised computing platform,” “cockpit/ADAS fusion,” or “vehicle computer.” Consumer confusion with “Android AI box” (CarPlay dongles) further muddies the search landscape. This definitional looseness constrains market sizing precision and creates buyer confusion between aftermarket entertainment upgrades and automotive-grade edge AI compute.

- Safety certification and mixed-criticality compliance barriers: Any AI box running safety-relevant workloads alongside infotainment must satisfy ISO 26262 functional safety (up to ASIL D, depending on the function), ISO/SAE 21434 cybersecurity, UNECE R155/R156 compliance, and Automotive SPICE process maturity. NVIDIA says DriveOS 6.0 is ASIL D conformant with ISO/SAE 21434 process certification. QNX Cabin is pitched as ISO 26262 ASIL D-certified for mixed-criticality systems. These compliance requirements create significant barriers for new entrants and lengthen time-to-market.

Key Trends

- Cockpit/ADAS fusion emerging as the dominant architecture direction: ECARX’s Antora 1000 SPB (April 2025) integrated cockpit, driving, and parking on a single platform. The Zenith platform on Qualcomm’s Snapdragon Elite Automotive (February 2026) runs Android 16/GAS, safety-critical cluster functions on QNX, and L2++ ADAS together on a single SoC. Qualcomm’s Leapmotor solution unifies cockpit, driver assistance, body control, and connectivity on one system. This fusion trend means AI box vendors must evolve from “box with extra silicon” to “platform with orchestration, middleware, and mixed-criticality execution.”

- Edge-cloud collaboration model defining AI deployment architecture: Automotive AI increasingly splits between edge (latency-sensitive, privacy-sensitive, real-time inference) and cloud (heavier reasoning, model training, data analytics, OTA model updates). The AI box is the edge node in this architecture. ThunderSoft’s AIBOX highlights compute allocation, model scheduling, and scenario adaptation for millisecond-level multimodal response. This edge-cloud architecture means the average selling price (ASP) of an AI box is driven not by the enclosure alone but by the combined value of SoC, thermal design, memory bandwidth, middleware, safety software, and OEM integration scope.

- Heterogeneous compute becoming the standard AI box architecture: The winning product configuration is heterogeneous computing across CPU/GPU/NPU, plus high-bandwidth memory, model scheduling/runtime orchestration, safety-grade OS/hypervisor for mixed workloads, and a toolchain for model porting, quantisation, and OTA updating. NXP’s S32/CoreRide supports edge intelligence across central compute, zone controller, and domain controller use cases. Qualcomm’s Ride Flex handles mixed-criticality workloads on the same hardware. Ambarella positions AI domain controllers for L2+ through L4 with low-power, perception-heavy architectures.

- OEMs bringing central compute in-house, changing supplier dynamics: OEMs are not passive module buyers. GM is bringing central compute in-house at the platform level with NVIDIA Thor. Leapmotor and Qualcomm announced a central-compute solution unifying cockpit, driver assistance, body control, and connectivity. Multiple automakers are partnering with NVIDIA for future driver-assistance systems. This means AI box suppliers must sell not just a module but a roadmap-compatible architecture component that fits into the OEM’s long-term platform strategy.
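
The model scheduling and mixed-criticality execution mentioned in the trends above can be illustrated with a toy admission policy. The sketch assumes a single shared TOPS budget and a greedy, most-critical-first rule; production platforms typically rely on hypervisor partitioning and reserved resources instead, and the `Workload` fields, the `admit` function, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    criticality: int    # lower number = more critical (0 = safety-relevant)
    tops_needed: float  # assumed compute demand in TOPS


def admit(workloads: list[Workload], tops_budget: float) -> tuple[list[str], list[str]]:
    """Greedy admission: most critical first, defer anything that no longer fits."""
    admitted: list[str] = []
    deferred: list[str] = []
    remaining = tops_budget
    # Ties in criticality are broken in favour of the smaller workload.
    for w in sorted(workloads, key=lambda w: (w.criticality, w.tops_needed)):
        if w.tops_needed <= remaining:
            admitted.append(w.name)
            remaining -= w.tops_needed
        else:
            deferred.append(w.name)
    return admitted, deferred


demo = [
    Workload("cabin_llm_assistant", criticality=2, tops_needed=60),
    Workload("parking_memory", criticality=1, tops_needed=30),
    Workload("driver_monitoring", criticality=0, tops_needed=10),
    Workload("gui_rendering_effects", criticality=2, tops_needed=20),
]
admitted, deferred = admit(demo, tops_budget=100)
print("admitted:", admitted)
print("deferred:", deferred)
```

On a 100 TOPS budget, the three smaller workloads are admitted and the in-cabin LLM assistant is deferred, for example to the cloud or to a later time slot. The point of the sketch is that orchestration logic, not raw silicon, decides which features run when compute is contested.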

Market Segmentation

By Product Layer

Aftermarket and installed-base AI boxes form the entry layer of the market: USB-connected or interface-connected modules that upgrade older IVI systems with AI features, smoother cockpit performance, and connectivity enhancements. This segment already has meaningful scale and is mainly used to solve IVI lag, outdated feature versions, and insufficient AI capability on vehicles that cannot receive platform-level upgrades. Solutions often integrate HiCar or CarPlay interconnection. SoC platforms from Rockchip (RK3588S), MediaTek (Dimensity Auto), and Qualcomm (Snapdragon SA-series) power this tier. The key distinction from consumer “Android AI boxes” is that automotive aftermarket AI boxes typically meet automotive temperature, vibration, and EMC requirements, though certification depth varies significantly by supplier.

OEM supplemental AI boxes are the core growth driver: modules designed by Tier 1s or platform integrators that sit beside an existing cockpit domain controller to add large-model capability without full E/E redesign. ThunderSoft’s Geely/NVIDIA AIBOX is the clearest public example. It debuted at IAA Mobility in September 2025, billed as an industry-first solution bringing large AI models into mass-production vehicles. ADAYO and BICV serve as OEM supplemental providers in China. Huawei’s AI BOX component within HMS for Car powers Chinese brands’ smart cockpit features globally. This tier typically operates at 30–200 TOPS with heterogeneous CPU/GPU/NPU compute, and represents the segment with the clearest near-term revenue growth.

AI-box-equivalent central compute is the convergence tier, where the AI box function blurs into cross-domain or central vehicle computers. NIO’s N-Box is an explicit example of a heterogeneous compute node that serves as the vehicle’s AI brain. ECARX’s Zenith platform runs cockpit and L2++ ADAS functions on a single SoC. GM’s next architecture consolidates dozens of ECUs into a unified NVIDIA Thor-based central compute core. This tier represents the long-term destination but is already generating near-term revenue as premium OEMs deploy centralised platforms. The category boundary between “AI box” and “central vehicle computer” is intentionally fluid at this tier.

By Application

Cockpit intelligence is the dominant near-term application: AI-powered in-cabin interaction including natural language processing, generative AI assistants, personalised recommendations, emotion recognition, gesture control, and advanced GUI rendering. ThunderSoft’s AIBOX handles compute allocation, model scheduling, and scenario adaptation for millisecond-level multimodal response. This is where the AI box’s value proposition is strongest today because cockpit AI workloads can be deployed incrementally on existing vehicle platforms.

ADAS enhancement: AI boxes provide supplemental compute for advanced driver-assistance features including high-precision parking memory (HPA), enhanced lane-keeping, adaptive cruise control intelligence, and sensor fusion across camera/radar/lidar inputs. Mobileye’s in-cabin sensing runs alongside road perception on the same chip. Qualcomm’s Ride Flex and Ride Elite combine cockpit and ADAS on shared silicon. This application drives the convergence trend toward cockpit/ADAS fusion architectures.

Fleet telematics: AI boxes provide edge compute for commercial fleet applications including predictive maintenance, real-time route optimisation, driver behaviour monitoring, and fleet-wide model learning. The vehicle telematics application is expected to grow at the highest CAGR in the broader automotive AI market. AI boxes serving commercial vehicles enable edge inference for fleet-specific workloads that benefit from local processing because of connectivity gaps and latency requirements.

By Geography

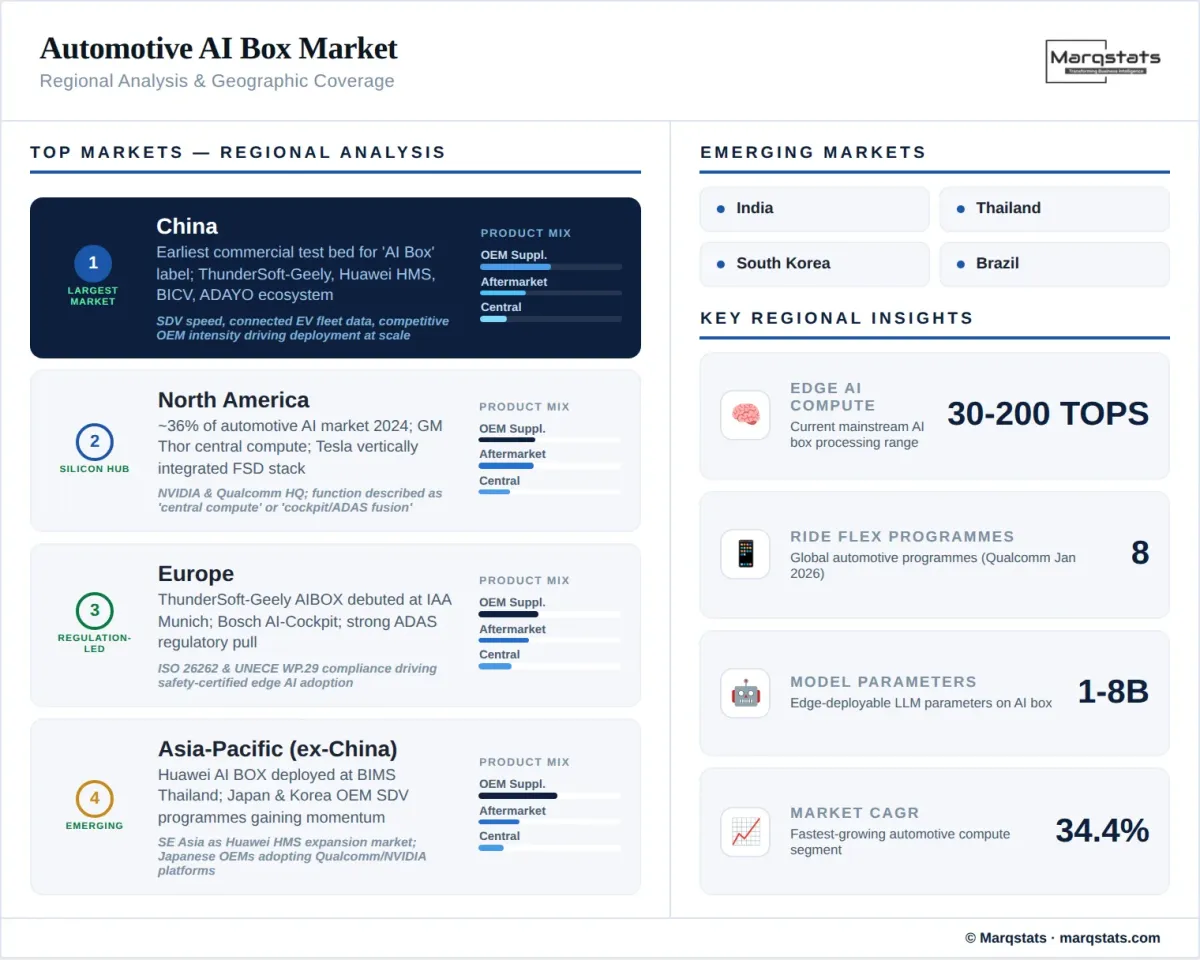

China

China leads the transitional productisation of the automotive AI box market and is the earliest and most explicit commercial test bed for the “AI Box” label. The vendor and case-study landscape is dominated by Chinese suppliers: ThunderSoft (AIBOX with Geely/NVIDIA), BICV, ADAYO, Banma, and, in the joint-venture context, Dongfeng Honda. NIO’s N-Box demonstrates premium-EV AI compute integration. Huawei’s AI BOX within HMS for Car powers Chinese brands (Chery, GWM, Changan) both domestically and internationally, including expansion into Thailand via BIMS 2026. The speed of China’s SDV ecosystem, its large fleet of connected EVs generating training data, and the competitive intensity among Chinese OEMs on in-cabin AI features create a uniquely fertile environment for AI box deployment at scale.

North America

North America accounted for approximately 36% of the broader automotive AI market in 2024. However, the AI box function is more commonly described there as “central vehicle computer” or “cockpit/ADAS fusion” than as “AI box.” GM’s October 2025 announcement of a liquid-cooled central compute unit with NVIDIA Thor at its core, alongside zone aggregators, is the clearest architectural signal. Tesla’s data advantage and vertically integrated AI compute stack set a different template in which the AI capability is embedded, not supplemented. Qualcomm (headquartered in San Diego) and NVIDIA (Santa Clara) anchor the silicon supply chain. The market opportunity lies more in OEM-integrated central compute than in aftermarket AI box retrofit.

Europe

Europe maintains strict data-privacy rules (GDPR) that amplify demand for edge inference—making the AI box’s local-processing capability particularly relevant for privacy-preserving AI features. Bosch describes future E/E architectures as zone-oriented with a few vehicle computers. ThunderSoft debuted AIBOX at IAA Mobility in Munich (September 2025). UNECE R155 (cybersecurity) and R156 (software updates) create compliance requirements that AI box vendors must satisfy. BMW’s integration of DeepSeek AI in China and Volkswagen’s OTA deployment of Cerence Chat Pro across European vehicles demonstrate European OEMs’ AI deployment strategies, though often without the standalone “AI box” label.

Asia-Pacific (excluding China)

Japan, South Korea, and Southeast Asia represent the emerging opportunity. Huawei’s HMS for Car expansion to Thailand via BIMS 2026 signals Southeast Asian market development. Japanese OEMs (Toyota, Honda, Nissan) have formed a semiconductor consortium to address domestic AI shortages. South Korea’s Hyundai is investing KRW 7 trillion in self-driving logistics corridors. The region’s automotive AI market is recording the fastest growth worldwide, driven by EV leadership and comparatively supportive regulatory environments.

How Competition Is Evolving

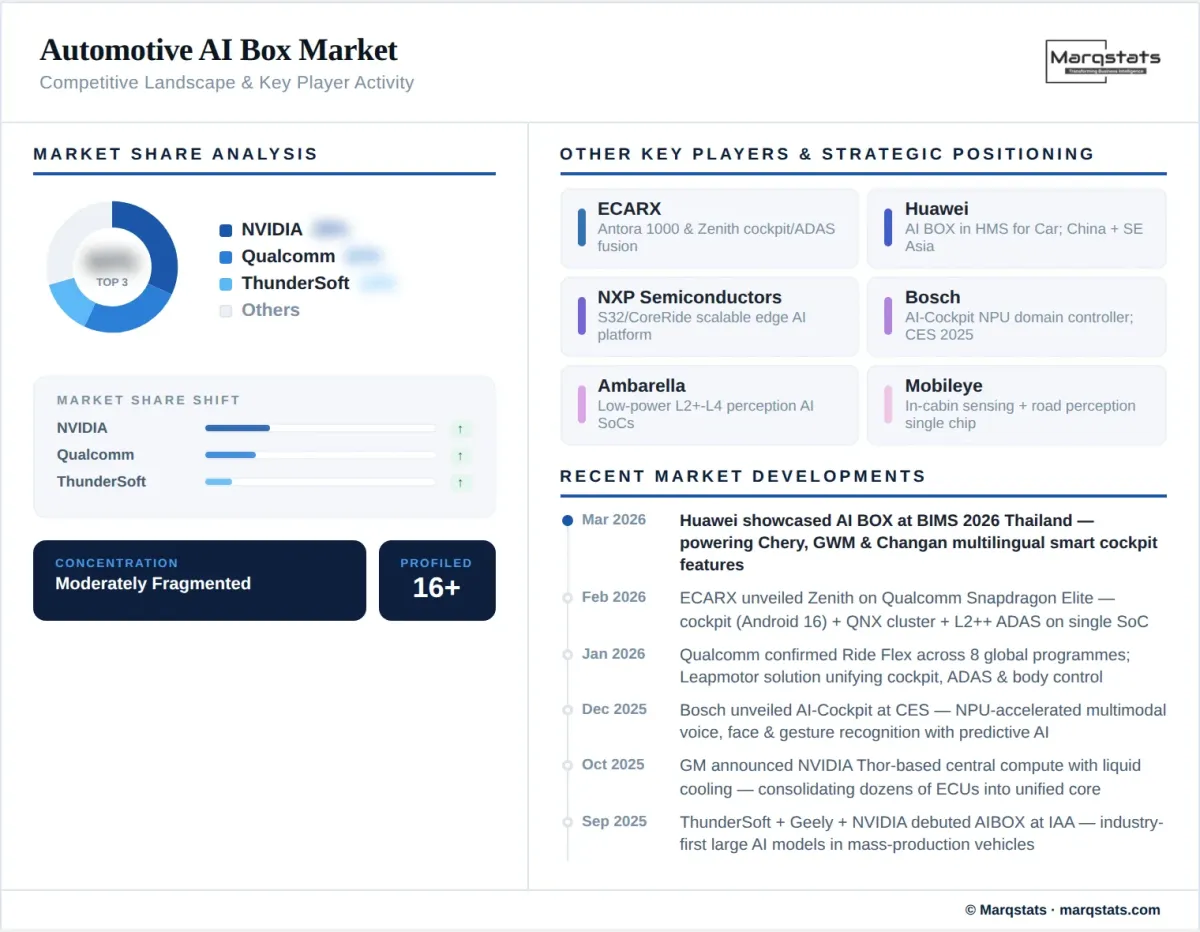

The automotive AI box market’s competitive stack breaks into four layers. First, silicon/compute platform vendors: NVIDIA (DRIVE platform, DriveOS, Thor/Orin for complex in-vehicle AI), Qualcomm (Ride Flex, Ride Elite, Snapdragon Elite Automotive for cockpit/ADAS fusion), NXP (S32/CoreRide for scalable edge AI from domain to centralised compute), Ambarella (AI domain controllers for L2+–L4 with low-power perception focus), and emerging players including Rockchip (RK3588S for entry/aftermarket), MediaTek (Dimensity Auto), and AMD (Versal adaptive SoC for automotive edge).

Second, module/platform integrators: ThunderSoft is the clearest “AI Box” leader globally, especially through its Geely/NVIDIA AIBOX at IAA 2025. ADAYO and BICV are important in China’s OEM supplement market. ECARX bridges chips, computing platforms, and software while moving from cockpit into cross-domain central compute (Antora 1000 SPB, Zenith). Bosch remains a major global systems integrator but leans toward vehicle computers and zonal architectures. Huawei’s AI BOX within HMS for Car represents the ecosystem-integrator model spanning maps, services, AI, and connectivity.

Third, OS/middleware/toolchain: DriveOS (NVIDIA), QNX (BlackBerry, ISO 26262 ASIL D certified), Android Automotive/GAS, NXP eIQ Auto/CoreRide, and supplier-specific orchestration layers determine whether a module can be homologated, updated, and scaled across programmes.

Fourth, OEMs/platform owners: GM (central compute in-house with NVIDIA Thor), NIO (N-Box as integrated AI compute node), Leapmotor (Qualcomm central-compute unification), and multiple automakers partnering with NVIDIA mean suppliers must sell roadmap-compatible architecture components, not just standalone modules.

Companies Covered

The report profiles 20+ companies with full strategy and financial analysis, including:

Coverage & Segmentation

This report provides a comprehensive analysis of the global automotive AI box market covering the historical period (2021–2025) and forecast period (2026–2030), with 2025 as the base year. The study examines market size in USD, unit volume forecasts, TOPS-tier segmentation, and growth trends across product layer (aftermarket, OEM supplemental, AI-box-equivalent central compute), application scenario (cockpit intelligence, ADAS enhancement, fleet telematics), compute tier (entry <30 TOPS, mainstream 30–200 TOPS, flagship 200+ TOPS), and geography (China, North America, Europe, Asia-Pacific ex-China). Company profiling covers 20+ players across silicon vendors, module/platform integrators, OS/middleware providers, and OEMs with in-house AI compute programmes. Standards analysis covers ISO 26262, ISO/SAE 21434, UNECE R155/R156, and Automotive SPICE.
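
The compute-tier segmentation above maps directly onto a small classification helper. In this minimal sketch the function name `compute_tier` is illustrative, and the handling of the exact 200 TOPS boundary (treated here as flagship) is an assumption, since the stated ranges "30–200" and "200+" overlap at that value.

```python
def compute_tier(tops: float) -> str:
    """Map a module's TOPS rating to the report's compute tiers."""
    if tops < 0:
        raise ValueError("TOPS rating cannot be negative")
    if tops < 30:
        return "entry"        # entry: below 30 TOPS
    if tops < 200:
        return "mainstream"   # mainstream: 30 up to (assumed) just under 200 TOPS
    return "flagship"         # flagship: 200 TOPS and above (boundary is an assumption)


for rating in (8, 30, 120, 200, 1000):
    print(rating, "TOPS ->", compute_tier(rating))
```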

Research methodology combines bottom-up unit modelling from OEM deployment announcements, SoC platform shipment estimates, and Tier 1 supplier disclosures, validated against broader automotive AI hardware market sizing. Primary research includes interactions with SoC vendors, Tier 1 integrators, OEM platform architects, and SDV strategy teams. The Marqstats vertical SaaS market report and agentic AI market report provide complementary intelligence on the software-defined and AI-native technology ecosystems driving automotive AI box demand.
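
A bottom-up unit model of the kind described above multiplies addressable vehicles by attach rate to get units, then by average selling price to get revenue, and sums across segments. The sketch below shows only that arithmetic: the `Segment` class is hypothetical, and every number is a placeholder chosen for illustration, not a figure from this report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    vehicles: int       # addressable vehicles in the year (placeholder value)
    attach_rate: float  # share of those vehicles fitted with an AI box
    asp_usd: float      # average selling price per unit in USD

    @property
    def units(self) -> float:
        return self.vehicles * self.attach_rate

    @property
    def revenue_usd(self) -> float:
        return self.units * self.asp_usd


# Placeholder inputs for illustration only; not report data.
segments = [
    Segment("aftermarket", vehicles=1_000_000, attach_rate=0.02, asp_usd=150.0),
    Segment("oem_supplemental", vehicles=500_000, attach_rate=0.10, asp_usd=400.0),
]

total_units = sum(s.units for s in segments)
total_revenue = sum(s.revenue_usd for s in segments)

for s in segments:
    print(f"{s.name}: {s.units:,.0f} units, USD {s.revenue_usd:,.0f}")
print(f"total: {total_units:,.0f} units, USD {total_revenue:,.0f}")
```

The resulting total would then be cross-checked against top-down estimates of the broader automotive AI hardware market, as the methodology describes.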